Beyond the Context Window: Implementing CoALA for State-Aware Enterprise Agents

1. Summary

The Problem: Statelessness in Enterprise Workflows

Large Language Models (LLMs) are powerful reasoning engines, but their utility in business environments is constrained by their statelessness. In a standard deployment, an agent resets after every session, retaining no record of user preferences, project history, or previously corrected errors. Users must therefore re-supply context and correct the same mistakes across sessions, which caps the efficiency gains that agents can provide.

The Goal

Our objective was to evolve the agent from a transient, session-based tool into a persistent Institutional Asset: a system that retains operational context, learns from user feedback, and improves its baseline performance over time.

The Solution

To achieve this, we implemented an architecture based on the CoALA (Cognitive Architectures for Language Agents) framework. By engineering a background memory processor (the "Hippocampus") and a dynamic context injection layer, we created a learning agent: a system that persists experience and makes institutional knowledge available to every user. This article details the technical implementation of that architecture.

2. The Constraint: Managing the Context Window

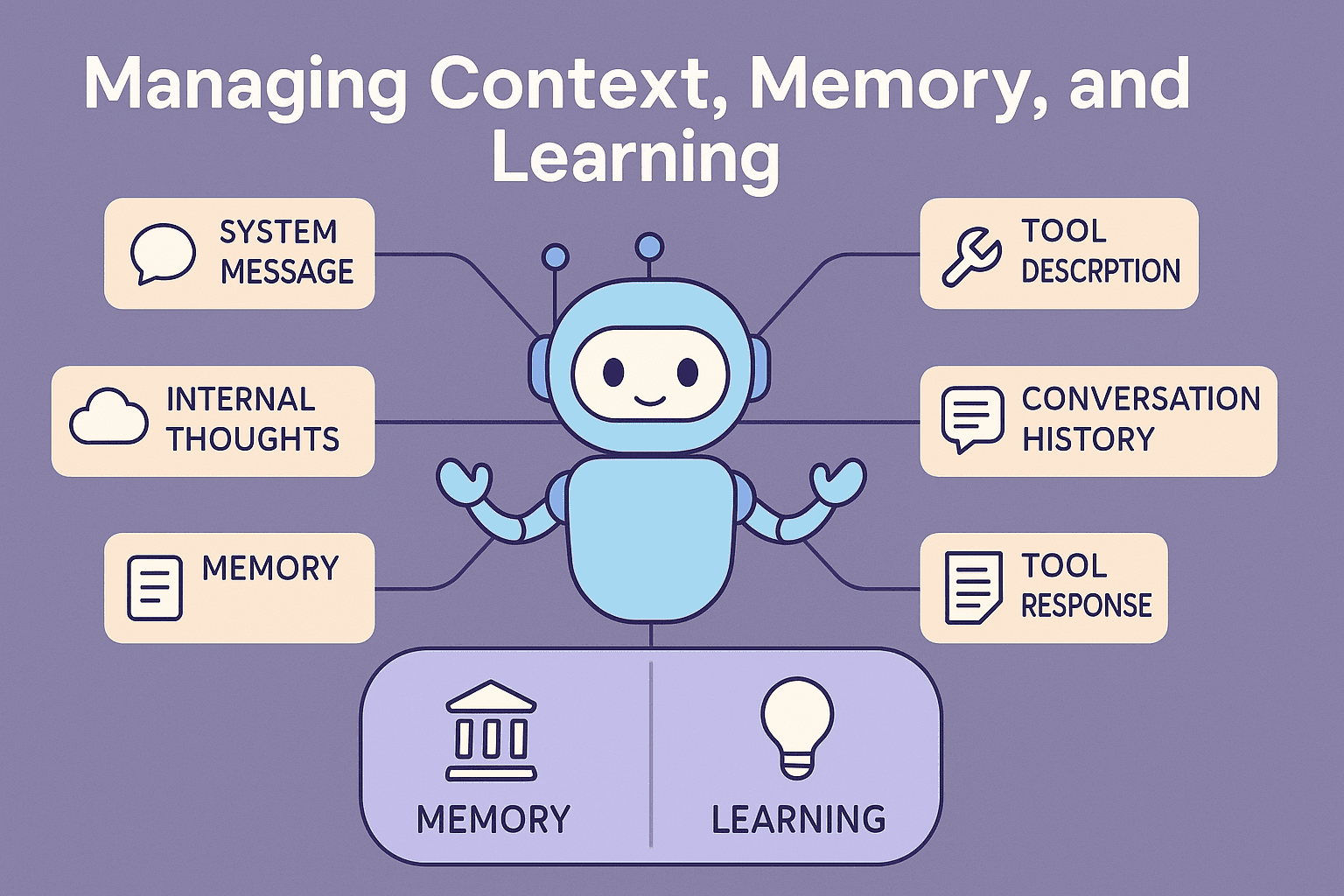

Communication with an agent is governed by the Context Window, which effectively functions as the agent's working memory. This window is finite, measurable in tokens, and determines the scope of data available for immediate reasoning.

fig 1: The agent context window.

Standard implementations often use a "sliding window" to manage this limit, discarding the oldest messages as new ones arrive. In a complex workflow, this leads to Contextual Drift: the loss of critical instructions or project constraints established early in the session.
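To make the failure mode concrete, here is a minimal sketch of a sliding window. The whitespace-based `count_tokens` is a crude stand-in for a real tokenizer, and the function names, budget, and sample messages are illustrative assumptions rather than part of our system.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def sliding_window(messages: list[str], budget: int) -> list[str]:
    """Keep the newest messages that fit within `budget` tokens."""
    kept: list[str] = []
    used = 0
    # Walk from newest to oldest and stop at the first message that does not fit.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "Constraint: all queries must target the read replica.",
    "Draft the schema for the orders table.",
    "Add an index on customer_id.",
    "Now write the reporting query.",
]
window = sliding_window(history, budget=16)
```

With this budget only the last two messages survive, so the constraint stated in the first message is no longer visible to the agent.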

The Fix: Context Engineering via Summary Injection

To mitigate data loss, we rejected the sliding window in favor of a structured Context Stack that prioritizes information relevance over recency:

The System Layer: Contains immutable behavioral instructions and guardrails.

The Summary Layer: Rather than discarding history, the system compresses prior turns into a high-density "Summary Message." This preserves the global context of the session without consuming the token budget of raw logs.

The Active Thread: The most recent interactions are retained in high fidelity to facilitate immediate reasoning.

This shift was the first step in moving from a linear log of text to a cyclic learning system.
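The three layers of the Context Stack can be sketched as follows. This is an illustration under assumptions, not our production code: `ContextStack`, `max_active`, and `naive_summarize` are hypothetical names, and the summarizer simply concatenates evicted turns where a real system would ask a model to compress them.

```python
from dataclasses import dataclass, field
from typing import Callable

Turn = dict[str, str]
Summarizer = Callable[[str, list[Turn]], str]


@dataclass
class ContextStack:
    system: str                                        # System Layer
    summary: str = ""                                  # Summary Layer
    active: list[Turn] = field(default_factory=list)   # Active Thread
    max_active: int = 4                                # turns kept in full fidelity

    def add_turn(self, role: str, content: str, summarize: Summarizer) -> None:
        self.active.append({"role": role, "content": content})
        excess = len(self.active) - self.max_active
        if excess > 0:
            # Fold the oldest turns into the summary instead of discarding them.
            evicted, self.active = self.active[:excess], self.active[excess:]
            self.summary = summarize(self.summary, evicted)

    def render(self) -> list[Turn]:
        messages = [{"role": "system", "content": self.system}]
        if self.summary:
            messages.append(
                {"role": "system", "content": f"Summary of earlier turns: {self.summary}"}
            )
        return messages + self.active


def naive_summarize(previous: str, evicted: list[Turn]) -> str:
    # Placeholder for a model call that would compress the evicted turns.
    notes = " | ".join(turn["content"] for turn in evicted)
    return f"{previous} | {notes}" if previous else notes


stack = ContextStack(system="You are a careful SQL assistant.", max_active=2)
for text in [
    "Use the read replica only.",
    "Draft the orders schema.",
    "Add an index.",
    "Write the report query.",
]:
    stack.add_turn("user", text, naive_summarize)
messages = stack.render()
```

After four turns the rendered stack holds the system message, one summary message that still mentions the read replica constraint, and the two most recent turns verbatim.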

3. The Process: Asynchronous Episode Extraction

Preserving the current session is only the first step. The critical challenge is capturing lessons from past sessions. To achieve this, we developed an asynchronous background process, internally referred to as the Hippocampus, that executes when a conversation reaches a natural pause.

This process transforms unstructured chat logs into structured Episodes. However, a simple log dump is too noisy. To extract meaningful signal, we apply a strict segmentation logic based on our "Swarm" architecture.

Step 1: Segmentation (Defining Boundaries)

Our system utilizes a swarm of specialist agents, each equipped with specific tools. We use these natural architectural divisions to slice the conversation stream:

Conversation Boundaries: Defined by Agent Changes. When the routing layer switches from the "Coder Agent" to the "Billing Agent," the current Conversation object is closed and a new one begins.

Topic Boundaries: Defined by Entity Changes. Within a conversation, if the user pivots from "Project Alpha" to "Project Beta," we detect this entity shift (via spaCy) and create a new Topic node.
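The two boundary rules above can be sketched as a single pass over the message stream. The sketch assumes each message already carries the handling agent and a dominant entity label (in practice the entity would come from an NER pass); the `Message`, `Topic`, and `Conversation` classes and their fields are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    agent: str   # specialist agent that handled the turn
    entity: str  # dominant entity, assumed to be extracted upstream
    text: str


@dataclass
class Topic:
    entity: str
    messages: list[Message] = field(default_factory=list)


@dataclass
class Conversation:
    agent: str
    topics: list[Topic] = field(default_factory=list)


def segment(stream: list[Message]) -> list[Conversation]:
    conversations: list[Conversation] = []
    for msg in stream:
        # Conversation boundary: the routing layer switched agents.
        if not conversations or conversations[-1].agent != msg.agent:
            conversations.append(Conversation(agent=msg.agent))
        current = conversations[-1]
        # Topic boundary: the entity under discussion changed.
        if not current.topics or current.topics[-1].entity != msg.entity:
            current.topics.append(Topic(entity=msg.entity))
        current.topics[-1].messages.append(msg)
    return conversations


stream = [
    Message("Coder Agent", "Project Alpha", "Refactor the Alpha importer."),
    Message("Coder Agent", "Project Alpha", "Add tests for it."),
    Message("Coder Agent", "Project Beta", "Now port it to Beta."),
    Message("Billing Agent", "Project Beta", "What did Beta cost last month?"),
]
conversations = segment(stream)
```

In this sample stream the agent switch yields two conversations, and the pivot from Project Alpha to Project Beta yields two topics inside the first one.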

Step 2: Classification & Validation

Once boundaries are established, we extract granular metadata for every message, including Domain, Operation, and Speech Act (Question, Command, Comment).
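As an illustration of the per-message metadata, the record below models the three fields named above. The `guess_speech_act` heuristic and its verb list are toy assumptions added only so the example runs; they are not the classifier we use.

```python
from dataclasses import dataclass
from enum import Enum


class SpeechAct(Enum):
    QUESTION = "question"
    COMMAND = "command"
    COMMENT = "comment"


@dataclass(frozen=True)
class MessageMetadata:
    domain: str
    operation: str
    speech_act: SpeechAct


# Hypothetical verb list for the toy heuristic below.
IMPERATIVE_VERBS = {"generate", "create", "add", "fix", "run", "write"}


def guess_speech_act(text: str) -> SpeechAct:
    # Toy heuristic: a question mark means Question, a leading verb means Command.
    stripped = text.strip()
    if stripped.endswith("?"):
        return SpeechAct.QUESTION
    first_word = stripped.split(" ", 1)[0].lower() if stripped else ""
    if first_word in IMPERATIVE_VERBS:
        return SpeechAct.COMMAND
    return SpeechAct.COMMENT


text = "Generate a SQL query for the User Analytics table."
metadata = MessageMetadata(
    domain="analytics",          # illustrative value
    operation="generate_sql",    # illustrative value
    speech_act=guess_speech_act(text),
)
```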

Finally, we employ a reasoning model to validate the extracted segments against strict rules. The primary acceptance criterion for an Episode is that a set of actions must culminate in a tangible result. If a segment is just "chatter" without an outcome, it is discarded. If the validation fails (e.g., the result is ambiguous), the model receives feedback and retries the extraction.
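The validate-and-retry loop can be sketched as below. It assumes the extraction model is exposed as a callable that accepts the segment plus optional feedback; the rule-based `validate` function stands in for the reasoning model, and `Episode`, `extract_with_retry`, and `max_attempts` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Episode:
    domain: str
    operation: str
    actions: list[str]
    result: Optional[str]  # None when no tangible outcome was found


def validate(episode: Episode) -> Optional[str]:
    """Return None when the episode is acceptable, otherwise feedback text."""
    if not episode.actions:
        return "No actions found; the segment looks like chatter."
    if not episode.result:
        return "The actions do not culminate in a tangible result."
    return None


Extractor = Callable[[str, Optional[str]], Episode]


def extract_with_retry(
    segment: str, extract: Extractor, max_attempts: int = 3
) -> Optional[Episode]:
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        episode = extract(segment, feedback)  # feedback is None on the first try
        feedback = validate(episode)
        if feedback is None:
            return episode
    return None  # never validated: the segment is discarded


def fake_extract(segment: str, feedback: Optional[str]) -> Episode:
    # Hypothetical stand-in for the model call: succeeds once it receives feedback.
    result = "query accepted by user" if feedback else None
    return Episode("analytics", "generate_sql", ["wrote query"], result)


episode = extract_with_retry("raw segment text", fake_extract)
chatter = extract_with_retry(
    "small talk", lambda s, f: Episode("general", "none", [], None)
)
```

Capping the number of attempts keeps a segment that can never satisfy the rules from looping indefinitely; such segments simply never reach the store.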

This rigorous filtering ensures that our Vector Database is populated only with high-value operational patterns, rather than noise.

4. The Feedback Loop: Episodic Injection

The existence of stored memory is insufficient; the agent must be architected to retrieve and apply it contextually.

We evaluated several strategies for applying retrieved memories. Folding them into Chain of Thought (CoT) prompts often proved too rigid; if a retrieved memory did not align closely with the current scenario, the agent would hallucinate constraints or fail to adapt.

The Solution: The "Memory Message"

We implemented a pattern of Episodic Injection.

Retrieval: When a user initiates a prompt, the system queries the vector database for semantically similar past Episodes.

Injection: Relevant Episodes are formatted into a dedicated Memory Message and injected into the Context Stack prior to the user's new prompt.

Operational Example:

User Request: "Generate a SQL query for the User Analytics table."

Memory Injection: "Observation: In a previous session regarding 'User Analytics', the user rejected a query for lacking index hints. Result: Negative."

Agent Action: The agent preemptively includes index hints in the generated SQL, avoiding the previous error.
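The retrieval and injection steps can be sketched with a tiny in-memory store. The three-dimensional vectors are hand-written placeholders for real embeddings, the second stored episode is invented for contrast, and `retrieve`, `inject`, `k`, and `min_score` are hypothetical names and parameters.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, store, k=2, min_score=0.5):
    """Return up to k episode texts whose similarity clears min_score."""
    scored = [(cosine(query_vec, vec), text) for vec, text in store]
    scored = [item for item in scored if item[0] >= min_score]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [text for _, text in scored[:k]]


def inject(stack, episodes, user_prompt):
    """Place a Memory Message after the existing stack and before the new prompt."""
    messages = list(stack)
    if episodes:
        memory = "Relevant past episodes:\n" + "\n".join(f"- {e}" for e in episodes)
        messages.append({"role": "system", "content": memory})
    messages.append({"role": "user", "content": user_prompt})
    return messages


# Placeholder vectors; a real system would embed the episode text with a model.
store = [
    ([0.9, 0.1, 0.0],
     "Observation: In a previous session regarding 'User Analytics', the user "
     "rejected a query for lacking index hints. Result: Negative."),
    ([0.0, 0.2, 0.9],
     "Observation: An unrelated billing export was accepted. Result: Positive."),
]
query_vec = [0.8, 0.2, 0.1]  # placeholder embedding of the new prompt

episodes = retrieve(query_vec, store)
messages = inject(
    [{"role": "system", "content": "You are a careful SQL assistant."}],
    episodes,
    "Generate a SQL query for the User Analytics table.",
)
```

The similarity threshold matters as much as the ranking: without it, the unrelated billing episode would also be injected and could push the agent toward constraints that do not apply.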

5. The System Architecture

By integrating these components, the architecture transitions from a linear input-output model to a cyclic cognitive system.

The Context Window manages immediate execution.

The Hippocampus manages consolidation (writing experience).

The Injection Layer manages retrieval (reading experience).

This creates a closed loop: Sense → Reason → Act → Learn.
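A toy version of the loop shows how the read and write paths meet. Everything here is a simplification under assumptions: `LearningLoop` is a hypothetical class, a plain list replaces the vector database, keyword overlap replaces semantic search, and `toy_reason` replaces the model call.

```python
from typing import Callable


class LearningLoop:
    def __init__(self, reason: Callable[[str, list[str]], str]):
        self.reason = reason            # stand-in for the LLM call
        self.episodes: list[str] = []   # stand-in for the vector database

    def recall(self, prompt: str) -> list[str]:
        # Injection Layer (read): naive keyword overlap instead of vector search.
        words = set(prompt.lower().split())
        return [e for e in self.episodes if words & set(e.lower().split())]

    def step(self, prompt: str) -> str:
        memories = self.recall(prompt)         # Sense
        return self.reason(prompt, memories)   # Reason and Act

    def consolidate(self, prompt: str, outcome: str) -> None:
        # Hippocampus (write): store the outcome as an episode.  Learn
        self.episodes.append(f"{prompt} -> {outcome}")


def toy_reason(prompt: str, memories: list[str]) -> str:
    return f"answer using {len(memories)} memories"


loop = LearningLoop(toy_reason)
first = loop.step("query user analytics")
loop.consolidate("query user analytics", "rejected: missing index hints")
second = loop.step("query user analytics again")
```

The first request runs with no memories; the second sees the episode written after the first, which is the behavior the closed loop is meant to produce.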

The business value of this architecture extends beyond efficiency. It captures Tacit Knowledge, the unwritten, experiential knowledge of senior staff, and gives it structure. Junior team members then benefit automatically from the organization's accumulated experience, because the agent retrieves "senior" strategies to guide "junior" requests.

6. Future Development

This implementation establishes the foundation for a state-aware agent. Our roadmap focuses on three advanced capabilities:

Topic-Based Retrieval: Moving beyond semantic similarity in individual prompts to analyzing the broader topic or domain of a conversation, enabling the retrieval of strategic context rather than just tactical corrections.

Innovation via Negation: Rather than prescribing a specific path, we aim to retrieve "Failure Modes" to define a boundary of negative constraints. This allows the agent to innovate within the solution space while strictly avoiding known pitfalls.

Synthetic Best Practices: Pre-loading the vector database with "Synthetic Memories" derived from corporate documentation and policy. This would provide a newly deployed agent with a baseline of institutional competence immediately upon activation.